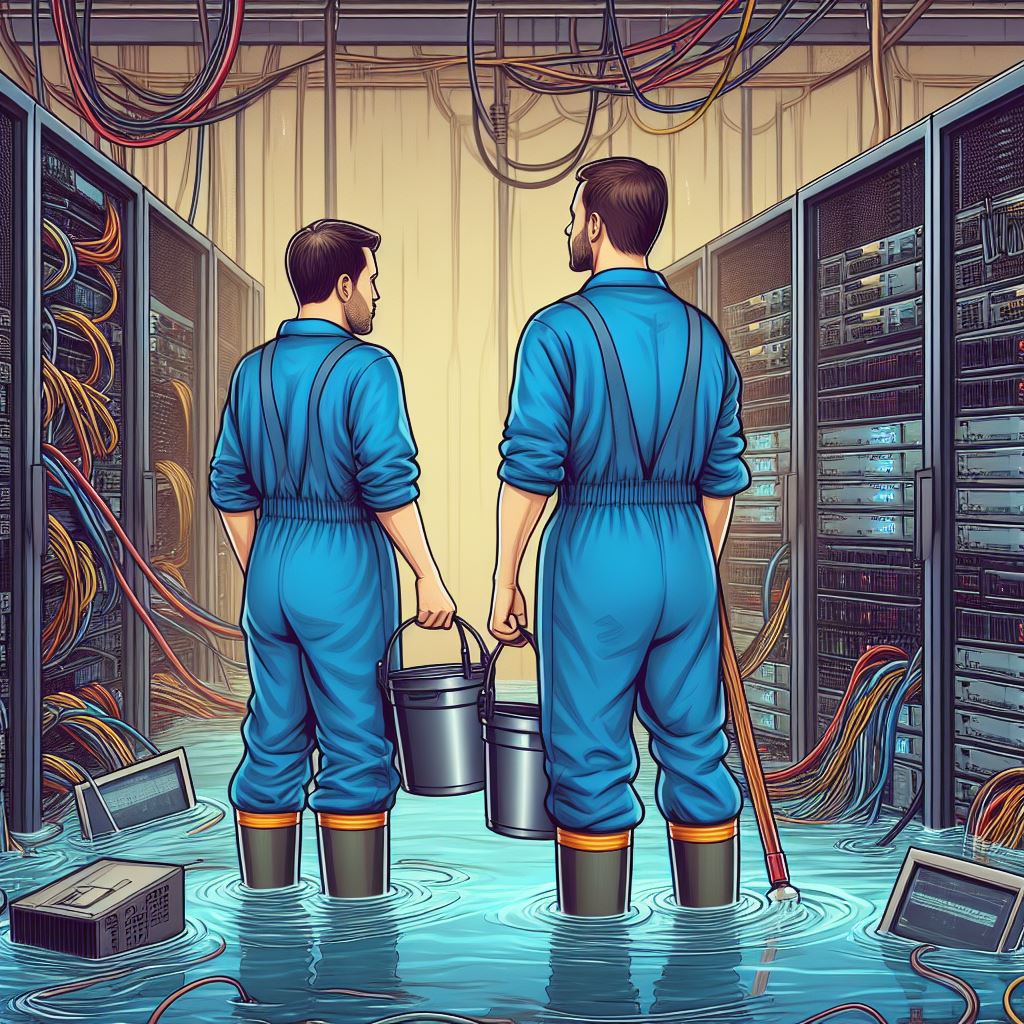

‘Vulnerabilities: You Can’t Stop The Flood, But You Can Avoid Drowning’

Maintained by NIST, the National Vulnerability Database currently lists 231,000 vulnerabilities. Last year, 25,000 were added at a rate of around 2,000 every month.

The technology underpinning the world’s largest entities is perforated, to say the least, and the capabilities needed to defend it are stretched.

How organisations can address this problem in a structured way, using processes and tools such as Continuous Threat Exposure Management (CTEM), was the subject of a debate amongst security leaders at an event in Central London. They settled on a number of key points.

A fragmented environment

There was agreement that traditional approaches to vulnerability management are underwater, a problem compounded by the sprawling nature of the modern technical environment. Across cloud, on-prem, multi-cloud, legacy, remote, supply chain, OT, IT and more, the attack surface continues to stretch ever further.

The same could be said of security teams. With staff spread thinly across a plethora of vulnerability scanners, threat intel feeds, patching tools and spreadsheets, the next breach is a small, overlooked piece of code away. For an example, look no further than Equifax. Given two months and an unpatched version of Struts, attackers managed to inflict damage to the tune of $575m and growing.

Focus for efficiency

Those present agreed that the elephant in the room is that the problem has become too much to manage. People, processes and technology simply can’t scale mitigations at the pace and volume necessary using traditional methods.

The answer lies in focussing on the vulnerabilities which pose the most risk and giving these priority. This is supported by data. Studies show that organisations can only remediate 5 to 20% of the vulnerabilities in their environment and that only around 5% are actively exploited in the wild.

Identifying which ones these might be, however, is no simple task. For this to be successful, each organisation’s specific view of risk must be taken into account.

Achieving this requires first aggregating data from across the entire environment to gather context. Infrastructure data, network devices, firewall configurations, CMDB records and identity information, for example, can all be used to create a detailed digital twin.

With this data as a backdrop, threat intelligence can be overlaid to help security teams answer the questions critical to understanding risk in context. For example, which vulnerabilities are being actively exploited? Are these on exposed assets in my environment? Those present agreed that answering such questions brings focus. In large environments, this can quickly whittle down millions of risk points to thousands.
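To make the two questions above concrete, here is a minimal sketch in Python of how such a filter might look. It is an illustration only, not a method described at the event: the `Finding` record, the `prioritise` function, the asset names and the placeholder CVE identifiers are all hypothetical, and a real programme would draw exposure data and exploitation intelligence from its own tooling.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Finding:
    """One vulnerability on one asset (hypothetical record layout)."""
    cve_id: str
    asset: str
    internet_exposed: bool  # assumed to come from the aggregated environment data


def prioritise(findings, actively_exploited):
    """Keep findings that are both actively exploited and on an exposed asset."""
    return [
        f for f in findings
        if f.cve_id in actively_exploited and f.internet_exposed
    ]


# Made-up sample data: placeholder CVE identifiers, not real entries.
findings = [
    Finding("CVE-0000-0001", "web-01", True),
    Finding("CVE-0000-0002", "web-01", True),
    Finding("CVE-0000-0001", "db-07", False),
    Finding("CVE-0000-0003", "hr-laptop-12", False),
]

# Assumed to be supplied by a threat intelligence feed.
actively_exploited = {"CVE-0000-0001"}

shortlist = prioritise(findings, actively_exploited)
for f in shortlist:
    print(f.cve_id, f.asset)
```

Of the four sample findings, only the exploited vulnerability on the exposed web server survives the filter. The same narrowing, applied at scale and with richer context, is what turns millions of risk points into a workable list.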

Mapping is important

An important part of this exercise, and one which elevates it beyond just ‘counting vulnerabilities’, is understanding the technical dependencies of critical business processes. Companies subject to DORA and the operational resilience expectations of the FCA may already have visibility of this for compliance purposes, but for others the complexity of this task can be daunting.

Security leaders agreed that mapping the web of related assets, systems and processes they secure is vital for understanding how an attack may unfold. Doing so shines a light on the paths that might be used for lateral movement and on where single points of failure exist, highlighting where effort should be focussed. This mapping should also include supply chains.
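One way to picture this mapping is as a directed graph in which an edge means "an attacker on this asset can reach that one". The sketch below is a simplified illustration under that assumption, not a description of any particular tool: the asset names, the `reachable` and `choke_points` helpers and the sample graph are all hypothetical. It finds what an attacker could reach from an entry point, and which assets sit on every path to a critical system.

```python
from collections import deque


def reachable(graph, start, blocked=frozenset()):
    """Breadth-first search: every asset reachable from start, skipping blocked ones."""
    if start in blocked:
        return set()
    seen = {start}
    queue = deque([start])
    while queue:
        node = queue.popleft()
        for nxt in graph.get(node, ()):
            if nxt not in seen and nxt not in blocked:
                seen.add(nxt)
                queue.append(nxt)
    return seen


def choke_points(graph, entry, target):
    """Assets whose removal cuts every path from entry to target."""
    if target not in reachable(graph, entry):
        return []
    nodes = set(graph) | {n for nbrs in graph.values() for n in nbrs}
    return sorted(
        n for n in nodes - {entry, target}
        if target not in reachable(graph, entry, blocked={n})
    )


# Hypothetical environment: edges point in the direction of possible movement.
graph = {
    "vpn-gateway": ["jump-host"],
    "web-server": ["app-server", "jump-host"],
    "jump-host": ["app-server"],
    "app-server": ["payments-db"],
}

print(sorted(reachable(graph, "web-server")))
print(choke_points(graph, "web-server", "payments-db"))
```

In this toy environment every route from the web server to the payments database passes through the application server, so hardening or segmenting that one asset would interrupt all of the mapped paths. Real environments are far larger and messier, but the principle of looking for shared chokepoints is the same.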

Never assume

As with any cybersecurity initiative, it was agreed that success relies on a continually updated picture of how the external threat environment applies to your specific organisation.

Vulnerabilities shouldn’t be discounted simply because they have aged. While high-profile CVEs have a definite window of popularity with adversaries, this doesn’t mean the risk is negated once they have fallen out of favour amongst the herd. What could have hurt you six months ago can still hurt you today if it remains unpatched.

Similarly, it is not uncommon for vulnerabilities with low CVSS ratings to be exploited purely because their seemingly innocuous nature keeps them off the radar of security teams. It is dangerous to assume.

Mitigation: the promise and the reality

The point was made that, even with a rigorous CTEM programme, patching is no panacea. In large organisations, identifying, assessing and testing software fixes prior to deployment in a way which keeps pace with risk can be a herculean task in its own right.

Against this backdrop, having an asset inventory with a clear view of dependencies and attack paths becomes even more important for directing the work streams which mitigate the largest portion of risk. Security teams also need to adopt alternative mitigations such as firewall rule changes and network segmentation.

Ultimately, like much in cybersecurity, vulnerability management needs to adapt as it tips into the age of overwhelming volume. Without a clear strategy to prioritise, security teams and outmoded tool sets are swimming against a considerable undertow. Only with focus can they rise above the tide.